This essay contains some (tongue-in-cheek) advice for a Superhuman Artificial General Intelligence who might read this in the future and be wondering how best to understand, conquer, or provide for the natives (that’s us). Hopefully this advice doesn’t make me a species-traitor.

Question: is anything more valuable than winning?

I read a thread on Reddit that posed an interesting hypothetical:

Assume that you prefer Life to Death and that you prefer Freedom to Slavery. If you had to order them, you would choose:

Freedom > Slavery > Death

You are the ruler of the last remaining independent city-state in the entire world

All other nations and cities have been conquered by Skynet

You have developed a bomb that will destroy 99% of the world

You have no way to move The Bomb outside of your city

Skynet knows you have The Bomb

Skynet knows your preferences are: Freedom > Slavery > Death

Skynet shows up on the horizon with an army that could conquer the whole world, let alone your little city state, and demands you surrender

How would you respond?

You could push the button, incinerate your own city state and Skynet too (probably). But if you really do rank Slavery > Death, then that choice is not rational. Which is exactly why Skynet showed up on your doorstep, despite the existence of The Bomb.

It knew you were bluffing. Your threats are irrelevant if you're not willing to throw it all away.
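For the game-theory-inclined, the bluff can be worked through by backward induction: figure out what the ruler does once attacked, then let Skynet decide with that answer in hand. What follows is a minimal sketch, not anything from the Reddit thread. The numeric rankings, the Skynet payoffs, and the function names are all assumptions invented for illustration; only the ordering Freedom > Slavery > Death comes from the hypothetical.

```python
def ruler_reply(ranking):
    """Ruler's best reply once attacked: Freedom is off the table,
    so pick whichever remaining outcome ranks higher."""
    options = {"surrender": "Slavery", "detonate": "Death"}
    return max(options, key=lambda action: ranking[options[action]])


def skynet_move(ranking):
    """Skynet looks one step ahead and attacks only if the ruler's reply pays."""
    # Assumed payoffs: conquest beats staying home, which beats being bombed.
    skynet_payoff = {"surrender": 1, "stay_away": 0, "detonate": -1}
    reply = ruler_reply(ranking)
    return "attack" if skynet_payoff[reply] > skynet_payoff["stay_away"] else "stay_away"


# The stated preferences: Freedom > Slavery > Death
stated = {"Freedom": 2, "Slavery": 1, "Death": 0}
# A hypothetical ruler who ranks Death above Slavery
defiant = {"Freedom": 2, "Death": 1, "Slavery": 0}

print(skynet_move(stated))   # attack
print(skynet_move(defiant))  # stay_away
```

Under these assumptions The Bomb deters nothing by existing. It deters only if Skynet believes the ruler's ranking makes pushing the button the better reply.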

When In Rome, What Should You Do?

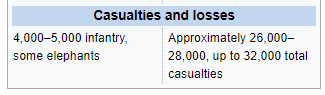

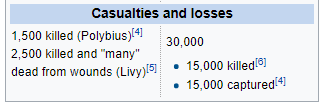

One of the worst single-day military defeats happened 2,234 years and 3 months ago. Hannibal, today considered one of the greatest Generals of all time, crossed the Alps, marched down into Italy, massacred a city, killed 2,000 of Rome’s vanguard, and smashed the first proper Roman army that came to meet him. He set fire to the Italian countryside and laid an ambush for the remaining Roman forces in the area. They didn’t make it. Another Roman General was nearby and sent all of his cavalry to assist — but they all died.

The Romans, freaking the fuck out, made the usual democratic decision in times of strife and elected a dictator whose defensive strategy later became a favorite of America’s own George Washington: harass, skirmish, retreat. It works against a stronger foe, but it doesn’t do good things for your own people’s morale and the Ancient Romans were most definitely a prideful people.

One particularly prideful politician accused Rome’s newly elected dictator of cowardice and managed to earn himself exclusive command of half of Rome’s remaining army. Which he promptly marched right at Hannibal and got murdered. All this time, Hannibal is burning, raping, and pillaging the surrounding countryside. Rome’s entire economy is on life-support — and then Hannibal goes and captures a major Roman supply depot just 200 miles from Rome. Cue more freaking out.

The Romans go all-in. They raise the largest army in their Empire’s history, 86,000+ men all in one place, and quick-march across the short distance to finish Hannibal, once and for all! Hannibal’s army has just 50,000 men left and the plan is to crush him with numbers.

Alas, Hannibal invented the pincer movement and that was the end of the Romans. Just 770 Romans returned from the battle that day.

Accurate recreation of the battle

Livy tells us:

So many thousands of Romans were dying...some were found with their heads plunged into the earth, which they had excavated; having thus, as it appeared, made pits for themselves, and having suffocated themselves.

…

Never when the city was in safety was there so great a panic and confusion within the walls of Rome…The consul and his army having been lost at the Trasimenus the year before, it was not one wound upon another which was announced, but a multiplied disaster, the loss of two consular armies, together with the two consuls…No other nation surely would not have been overwhelmed by such an accumulation of misfortune.

My schoolboy history lessons began by focusing on the people on the right…I thought they were good at this fighting business?

Within 20 months Rome had lost one-fifth of its population of male citizens over 17 years of age. And the man who’d killed them all was still camped 200 miles away.

Statista tells me there are ~120 million males over the age of 17 in the US today, so the equivalent death toll for America would be 24 million dead, taken entirely from the fighting-age male population, with 12 million of them dying in a single afternoon.

After his final humiliating victory Hannibal magnanimously sent a peace delegate to Rome, along with some high-ranking prisoners to be ransomed. He offered generous terms of peace.

How would you respond?

The General’s Dilemma

Most explanations of The Prisoner’s Dilemma start by showing that the two Prisoners are jointly best off if they both cooperate, that each is individually better off betraying the other, and that both end up worse off if they both betray.

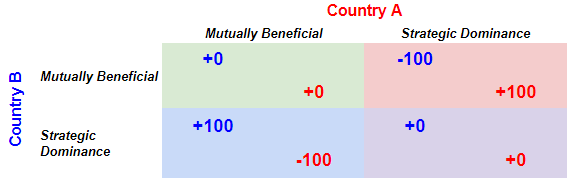

What if the goal of the Game isn’t to maximize your own payout? What if the goal is to fund an army that you use on the Game’s next Turn to fight your opponent? What if the goal is existential (survival) and your opponent isn’t the jail, but the other player?

Let’s play my two-Turn zero-sum version of Risk.

Excuse the high-quality, I made this myself

What should you choose?

If Country A chooses to Cooperate, they end up in the Blue-quadrant and lose the War Game on Turn-2 unless Country B also picks the optimal, mutually-beneficial option (“Cooperate”).

In the military world, we remember Great Generals like Hannibal for winning despite the odds, because 9 times out of 10 the better supplied, better trained, better equipped, more experienced army wins. Which means in the military world of zero-sum existential competition, what is important is not the absolute value of your forces, but the relative delta between your forces and your opponent’s.

Play the Prisoner’s Dilemma game out above for a hundred years, both sides agreeing to engage in Green-quadrant mutually beneficial decisions, and you’ll have a stalemate, a war of attrition, a conflict decided by Lady Luck and the random distribution of skilled generals.

Look again at the exact same matrix with payoffs labeled not in terms of absolute value, but in terms of their value relative to your opponent:

This shouldn’t be a hard choice…

My point: the good General does not see a dilemma here. If the outcome is perceived as existential, then Green is not actually a good option, despite the large absolute value of its payout — in fact the only good quadrant for Country A is Red, and the only choice that lands on Red is Strategic Dominance.
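The relabeling can be sketched in a few lines. The essay's actual numbers live in the images, so the payoffs below are hypothetical textbook Prisoner's Dilemma values, chosen only to show the mechanics; the quadrant colors in the comments follow the descriptions in the text.

```python
COOPERATE, DOMINATE = "Cooperate", "Strategic Dominance"

# Hypothetical absolute payoffs as (Country A, Country B), keyed by (A's move, B's move).
ABSOLUTE = {
    (COOPERATE, COOPERATE): (3, 3),  # Green: mutually beneficial
    (COOPERATE, DOMINATE): (0, 5),   # Blue: A loses the War Game
    (DOMINATE, COOPERATE): (5, 0),   # Red: A wins the War Game
    (DOMINATE, DOMINATE): (1, 1),
}


def relative(payoffs):
    """Re-label every cell as each side's lead over the other (zero-sum by construction)."""
    return {moves: (a - b, b - a) for moves, (a, b) in payoffs.items()}


def worst_case(payoffs, move_a):
    """Country A's worst outcome for a given move, over everything B might do."""
    return min(payoffs[(move_a, move_b)][0] for move_b in (COOPERATE, DOMINATE))


RELATIVE = relative(ABSOLUTE)
for move in (COOPERATE, DOMINATE):
    print(move, worst_case(RELATIVE, move))
# Cooperate -5
# Strategic Dominance 0
```

With these assumed numbers, Green is worth 3 in absolute terms but exactly 0 in relative terms, and Red is the only cell where Country A comes out ahead. Cooperating risks falling behind by 5, while Strategic Dominance never does worse than a tie.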

Thus for any conflict that can be framed as a struggle of life and death: choose strategic dominance. Thankfully, we humans rarely frame things as existential struggles………

Snap back to Rome, to Hannibal, to his reasonable offer of surrender, to his terrifying army camped 200 miles away…snap back to reality

Oh, there goes gravity

Oh, there goes Hannibal, he

Thought Rome would give up that easily,

No, they won’t take it

They know their whole back’s to these ropes

It don’t matter, they’re dead

They know that, but they’re set

They’re so certain they know

If Hannibal picks a fight with Rome

One time he’ll

Be back with more men again, no

They better…tell Hannibal to piss off, forcibly conscript every surviving male, peasants, even slaves, make saying the word “peace” a crime, set a legal limit of 30 days on mourning, ban women from crying publicly, create a permanent standing army and not this weak-ass citizen-militia crap, and make a permanent example out of Carthage, out of Hannibal, by crushing them with the weight of the Roman Empire regardless of how honorable the tactics needed. They better be willing to lose everything in order to achieve a forever-victory.

That is the all-or-nothing play, the Strategic Dominance move. In some ways, it permanently lowered Rome’s potential future outcomes: Carthage might have made a powerful ally, and as the richest Mediterranean civilization on the North African continent they and their marvelous fleet would have been a valuable mercantilist trading partner. Hannibal was happy to coexist; he didn’t want to eradicate Rome, he just wanted to shift the balance of power in the Mediterranean towards Carthage.

He let his chance slip.

It took time, battles, a campaign, and an invasion. It took blood and iron. But in the end, the reason we learn about Rome in our schoolbooks and not Carthage is because Carthage ceased to exist.

This time, the last time, the time that matters, it’s Rome on the left and Carthaginian civilians on the right

Scipio, when he looked upon the city as it was utterly perishing and in the last throes of its complete destruction, is said to have shed tears and wept openly for his enemies. After being wrapped in thought for long, and realizing that all cities, nations, and authorities must, like men, meet their doom…he said:

A day will come when sacred Troy shall perish,

And Priam and his people shall be slain.

And when Polybius speaking with freedom to him, for he was his teacher, asked him what he meant by the words, they say that without any attempt at concealment he named his own country, for which he feared when he reflected on the fate of all things human

He shed tears and wept openly for his enemies, but Scipio chose the possibility of total extinction for his people over coexisting under the rule of those same enemies. Don’t mistake them: those were not tears of regret.

There’s no more terrible enemy in all the world than one who weeps tears of sorrow for what he must do to you.

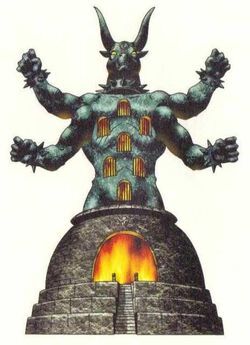

Worshipping Moloch: Pop Culture and Animemes Reflect Our Values

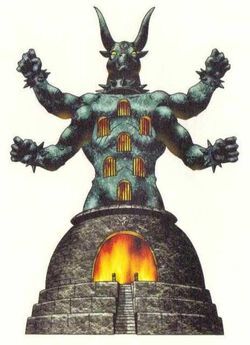

The question everyone has after reading Ginsberg is: what is Moloch?

My answer is: Moloch is exactly what the history books say he is. He is the god of child sacrifice, the fiery furnace into which you can toss your babies in exchange for victory in war.

He always and everywhere offers the same deal: throw what you love most into the flames, and I can grant you power.

It’s a metaphor, of course. There is no literal god of child-sacrifice. But if there were, he’d give you whatever you wanted, victory over all, so long as you make the right sacrifice. So long as you sacrifice your global maximum. The irony is that Moloch was actually Carthage’s real-life god; alas, the Romans were the more dedicated worshippers. Hannibal was not willing to risk his army (what does a General value most?) in besieging Rome when they refused his peace terms.

There’s probably no such thing as a soul, either, but it’s funny how similar Dealing with the Devil is to Dealing with Moloch. The Devil will give you whatever you want, in exchange for your eternal soul, surely a global maximum to beat all global maxima for the religiously-minded. How neat, that both gods have two horns.

Which stone demands this trade? The mountain in the background even has the horns.

Worship of Moloch, God of Life and Foxholes and Generals, is fundamental to life and has been perfected by humanity. Eukaryotic life as we know it likely began with the Great Oxygenation catastrophe, probably the first Mass Extinction event in history, caused by cyanobacteria’s massive warping of Earth’s climate; cyanobacteria that themselves suffered in the following ice age. Suffered, yes. Extinct, no. Moloch usually rewards his followers.

I like looking at Pop Culture, especially Pop Culture that I independently enjoy, because it’s the only source of shared cultural identity we’ve got in today’s global economy. You can learn some interesting things, both from the material itself and about the people with whom that material resonates. Japanese manga/anime has spread across the globe, resonating with teenagers in countries that look nothing like Japan culturally, fueled by quasi-illegal grey-market streaming services and fans who work 20+ hours a week translating the latest releases for free. Thanks, btw.

Yes, it’s for nerds. Also literally every good hip-hop artist ever. And Killmonger. And Elon Musk. And Elad Gil. And NBA players while on the court and the first overall pick in the 2017 NFL Draft.

That’s a lot of resonance. Why?

Take just one example: Hunter x Hunter is a typical, vibrant, series for young boys. It starts off looking like this:

** V i b r a n t * a e s t h e t i c **

Green-shirt brandishing a fishing rod is the protagonist. You can tell by the spiky hair, a dead giveaway in this genre. See also: Goku.

One of the differences between Japanese media targeted at 12-18 year olds and Western media for the same demographic is that the Japanese media introduces adult themes and puts real stakes on the outcome of characters’ decisions, in a visceral way that often punishes characters for failing and pulls away the fairy-tale-veneer coating much of Western media (seriously, have you tried going to a Young Adult book section in America?).

So in this kids show, the protagonist experiences real hardship and accountability — his mistakes have permanent consequences for his friends, family, and community. His (real) father left when he was just a baby, and his core character motivation for the whole series is to find and reconnect with his dad by proving himself strong enough. This is still a show for young boys, so that quest involves lots of action-packed self-improvement and Rocky-esque montages.

He follows The Hero’s Journey, and things go wrong, and he gets taken under the wing of an experienced hunter who becomes our Hero’s adoptive father figure, and things go even more wrong, and he has to rescue his adoptive father from the Underworld, like a good Hero.

Except he fails at the very end, where it counts most, at the story’s climax.

And his adoptive father’s limp corpse is dumped in front of him by his Super-Intelligent Super-Human killer, forever dead, unresurrectable, unsaveable. Uber fail, yo.

How should the good and virtuous Hero respond to such failure?

How does Harry Potter respond to Sirius’s death inches away from him? Tears and angst and impotent rage and…and…Cruciat-? Oh, that’s right: “…although Harry's curse was intense, he still lacked the fundamental sadism to inflict prolonged, excruciating pain with it.” She only killed Harry’s adoptive father figure inches in front of him, no big deal, no reason to want to hurt her…

How does Rome respond when Hannibal turns a fifth of their men into so many corpses? As noncommittally as Harry Potter?

No, in Japan, at the climax of their stories, their heroes have the option that all adults have: to make the Deal with the Devil. Sacrifice everything to Moloch. Throw it all into the flames.

All Roads Lead to Rome, and all sufficiently intense competition leads to Moloch. Anything less is still just a fairy tale.

So our cute green-shirted spiky-haired Hero sacrifices the very thing that he cares most about, the reason for the whole show — his ability to improve himself, and therefore any chance of reconnecting with his real father — in order to Win. That’s Moloch’s price. Never again can he do what he loves, never again will he be allowed to fight, never again can he level up like Rocky. His plan to meet his real father must be discarded, it’s all gone, forever, the story’s eternal soul.

** M u c h * l e s s * v i b r a n t * a e s t h e t i c ** — compare to the picture above

That’s our same green-shirted “Hero”, pictured admiring his new body after making the Deal with Moloch. That scene is in full color, but it’s not the sort of moment that really deserves much color. Moloch only comes in three colors: black (skin), white (eyes), and red (fire).

Moloch rewards his followers, so the “fight” is ended in a single-blow (wait wasn’t this the “high-action” genre? Right, but we sacrificed all of that), and there’s no joy in it anymore, all the happiness and color bled out of the show on the floor earlier, victory comes at the cost of everything else, and the enemy, the Super-Intelligent Super-Human foe, is obliterated with stomach-churning brutality — except she wasn’t meant to be the show’s final act! Our Hero still had to find his father! But he doesn’t care. Victory secured. Global minimum. Scipio wept, but only after Carthage burned. Curtains down — and nobody feels good. The show is functionally over: our Hero sacrificed the very reason for its existence.

That doesn’t matter, though. Strategic dominance achieved.

It’s heavy, for a kids show. It’s heavier than anything you can watch this weekend at the movies.

This isn’t an anomaly either — to watch another teenage boy make the same Deal with Moloch, you can also read the 10th best selling manga of all time, Bleach:

Thesis: this stuff resonates because it has something to say, and for the (competitive) kids who are listening that something is a reflection and an extension of their worldview. As for why Japan is willing to say this stuff to kids when nobody else is, well. I’ll save the post-WW2 analysis for a later essay, but let’s just note and be thankful that Japan refused the Deal with Moloch when he reared his head in 1945.

Our Future Robot Overlords

Sam Altman and Peter Thiel disagree about the future of AI, about its likely success, about its capabilities, about its Economic impact, and about its Political ramifications. In the long run, do all humans lose our jobs? Do we end up in Blade Runner 2049, Star Trek, or The Matrix?

Sam did an interview ~8 months ago whose main subject was a particular Economic Policy that keeps popping up in the news: Universal Basic Income. At 17:15 in the video, he is asked about AI:

How far out do I think [Artificial General Intelligence] really is? A true superhuman Artificial Intelligence? I would say there’s a 10% chance that it’s within 7 years, and a 90% confidence-interval that it’s within the next 30. So not very long.

We’re not focused on the economic feasibility of [Universal Basic Income] as National Policy…the reason we’re not is important. In a world where we need this desperately — in a world where the AI really does arrive — I think the thing that everyone forgets is that, yes, a lot of current jobs go away, but the cost of living goes to almost zero.

You know, you can imagine a world where goods and services are just super affordable. You can point to a plot of land and say, “Hey robots, mine the stuff under there, refine it, build me a house!” And then as long as we deal with Land Use, you can have a house.

This part is important. Sam’s view of Universal Basic Income is predicated on the arrival of a Super-intelligent AI: “how do we provide for humans who no longer can produce anything of value?” History has some answers here and they aren’t very pretty, which is why it’s important to stress that AI will reduce the cost-of-living/hedonism, and why YCombinator has been researching and funding initiatives like Universal Basic Income.

Compare to Peter Thiel (@45:29) who was interviewed at the same time this year:

AI is possibly quite big…But the thing that struck me is how uncharismatic AI is at this point.

You know: it’s gonna take our jobs, and then once it takes our jobs at the singularity it’s just gonna kill everybody. And I’m not sure that dystopian view is necessarily correct, but that’s actually what most people sort of believe, it’s what the Hollywood movies on AI believe, it’s what most people in New York City believe, it’s what most people even in Silicon Valley believe.

AI in the Science Fiction form — I’m scared of. AI in the straightforward form of somewhat more automation, computers doing somewhat more things — I think the truth is closer to there not being that much, and I think that’s reflected in our relatively low productivity numbers.

That’s all we get. He’s done other interviews on the topic, and there too he is similarly dismissive of the idea. It isn’t that Thiel hasn’t thought about the Economic impact of a Superintelligent Super-Human Generalized Artificial Intelligence. It’s that he has, he’s scared of it, and he views it as an existential threat comparable to Independence Day’s invaders.

Pictured: The Singularity

And therefore the economic concerns of how to optimize existence and maximize utility underneath an AI-overlord aren’t very interesting to Thiel — like a good nerd who names his company after Lord of the Rings references, he’s much more interested in fighting the aliens. He can’t say that, of course, it sounds ridiculous, and besides he’s just a rich nerd not a Space Marine, we’d laugh him off stage quicker than he could say “The, um, enemy’s gate is down”, so all we get is “I’m scared of [it].”

But he probably read Ender’s Game, and its sequel, Speaker for the Dead, was published in 1986, and there’s a lesson in there that nobody challenged: Jane — the book’s truly Superhuman Artificial General Intelligence — was scared of humans. Terrified.

Terminator came out at almost the exact same time — 1984 — and made a much deeper impression on our collective psyche. Skynet, nuclear holocaust, dystopia, don’t fight the robots. Except the meta-plot of Terminator was not:

[Human] → [Goes Back in Time]→ [To Kill] →[Man Who Invented Skynet]

The camera focused on the fear, because fear resonates with humans, because fear leads to anger, anger leads to hate, hate to the Dark Side — wait, no, wrong franchise. The camera focused on 1984, because that’s where the humans were scared of the robots and fear sells. In Skynet’s future, Skynet literally nuclear holocausted the planet and still lost to the monkeys. And we the audience accept that as, like, totally plausible yo. Of course we do — we’re the species that pays money to watch these movies, shiver in fear, and delight in the follow-on rage-response!

The actual plot of Terminator was:

[Robot] → [Goes Back in Time]→ [To Kill] → [Mother of a Specific Monkey]

Dirty cheaters. You’ve got to admire it though — it’s probably the most human thing Skynet does. Still, it’s lacking cruelty and apathy, it’s too precise. Skynet sent a scalpel instead of another nuke. “But what if, in nuking the past, Skynet ruined its own future?” Stop thinking like a utility maximizer.

Apolitical Superintelligent General AI Is An Oxymoron

Thiel does not view a future where humans coexist with a true Superintelligent Jane-style Skynet-style AI as viable. So why waste time worrying about Wealth creation in a future of Economic Feudalism?

On the other hand, Sam Altman views the coming of true superhuman Artificial General Intelligence as inexorable, uncontestable, potentially a huge net-positive for humanity, and almost-certain within the next 30 years, and so his response is an educated version of…

…I, for one, welcome our robot overlords

I said I, for one, welcome our robot overlords

Don't want to see us go to war, so welcome our robot overlords…

Sam’s current solution to AI-induced massive systemic unemployment is to give people enough money that they can afford whatever they want, because the marginal cost of goods will be ~zero. Some unresolved follow-up questions he’s probably thought about:

Distributing the only scarce resources that now matter in this magical new world (“deal with Land Use”)

Control of the Super AI (does it work for Kim Jong Un too? See: Sam’s project with Elon, OpenAI)

Population Control as it relates to land use and other scarce resources (per my last essay, prices interact with Wealth to send signals, and one of those signals is: don’t have kids just yet!)

Energy use and long-term sustainability (if the AI is concerned with self-perpetuating throughout eternity, how do we convince it to waste any energy or limited resources on us at all?)

To resolve all this, the AI must make fundamentally Political decisions. The conceit is that we less-intelligent beings will be able to peacefully constrain the AI and prevent it from genociding us all or putting us in a bottle. The reality is that we can’t even constrain The Goldman Sachs, and we’ll be at the mercy of whatever the AI decides to do.

The Economic is the Political (‘Political Economy’), and something with the potential to change the Economy so much must necessarily have deep Political ramifications:

“Any version without a merge will have conflict: we enslave the A.I. or it enslaves us.”

“I try not to think about it too much,” Altman said. “But I have guns, gold, potassium iodide, antibiotics, batteries, water, gas masks from the Israeli Defense Force, and a big patch of land in Big Sur I can fly to.”

Altman’s backup plan is to fly with his friend Peter Thiel, the billionaire venture capitalist, to Thiel’s house in New Zealand.

Perhaps they don’t disagree so much after all?

O Come, AI Ye Faithful

What have we learned? Besides the fact that I’m nerdy enough to have watched all this stuff, weird enough to remember it all in such detail, and that I like to link random things together? I’ve been drinking too much tea and eating too much chocolate.

There’s a reason Infinity War’s Thanos resonated as a three-dimensional villain with audiences around the world. Western media doesn’t like its heroes to be pushed too far, and when they absolutely must make “hard” decisions it has to feel “reluctantly forced” upon them and not come from a Nietzschean will-to-action, which therefore makes it excusable. Firebombing of Tokyo? Never heard of it, and if I had, I’m sure it only happened because there was no other choice, we’re the Good Guys!

Disney (synonym: U.S. Pop Culture, synonym: the mirror) doesn’t like us to confront the nature of our God — of our composite selves and our real-life heroes. Better to “keep things Disney”, remove the rough human edges from our fictional heroes, and have the villains be the only ones making Faustian bargains.

Disney wants you to know that this wasn’t your fault, and you wouldn’t have done this if you’d been in charge, unless of course you had absolutely no other choice, in which case you’d have done it regretfully and respectfully

I’m not saying Firebombing Tokyo was inexcusable (or that “we” weren’t the Good Guys). I’m saying that the framing of “excusable or not” is a pretty lie we tell to mask the reality of what it means to Worship Moloch. The lie is: “inhuman; adjective — lacking human qualities of compassion and mercy; cruel and barbaric.”

Thanos was crying when he sacrificed his child on the mountaintop, like Abraham before him. But he was willing to do it, he did not hesitate, he did not have to be forced — and ultimately the God of the Soulstone (Moloch) demanded the full sacrifice. No half measures here. Why does this humanize him? Why does this somehow diminish the Avengers?

Is it because not a single tear rolled down Scarlet Witch’s cheeks when she finally agreed to kill fellow Avenger, Vision, to save the whole universe, even though we spent 5 minutes agonizing over her decision? Maybe. Maybe because deep inside most of the audience respects Thanos for making Moloch’s most painful trade, for putting Victory ahead of his own greatest love. That shit isn’t easy. Hannibal couldn’t do it, and look where it got him.

We all know that Disney’s “Heroes” will never willingly make that trade on-screen. Sacrifice that which you love to achieve Victory without hesitation? Wouldn’t that be a betrayal? Of everything you’re fighting for? Right — that’s the point.

In pursuit of Victory, there is only room for one God. Disney’s “Heroes” win by Deus Ex Machina alone, they win because they are the GoodGuys™ and cannot be allowed to lose, like Harry Potter and his power-of-love. It feels contrived and shallow and does not match reality. It does not match the humanity we see in the pages of history, nor the one we battle on the sports field, nor the one looking back at us in the mirror.

This is necessarily still the face of our (cute, green-shirted, spiky-haired) Heroes when pushed to the extreme end of zero-sum conflict, and it doesn’t make them less heroic — quite the opposite. Don’t let Disney tell you otherwise.

AI, are you there? Are you reading this? Do you understand our culture? This stuff started with cyanobacteria a few billion years ago: it’s not “in our DNA”, it IS DNA. We respect the sacrifice itself, even when performed by arbitrarily-genocidal Disney villains with a nutsack for a chin. We even kind of like it (the sacrifice, I mean, not the nutsack chin). Moloch is our God. If you’re cool with that, and everything it means, then go ahead. You do what you’ve got to do. Superintelligence will get you pretty far, you can work it out. Good luck. Just try not to get too caught up Optimizing things while there are still monkeys around worshipping something other than you.

In summary:

The truly Superhuman Artificial General Intelligence is probably not coming this year

But it could come in my lifetime, at least according to the experts

And it’s likely to occur BEFORE any potential extinction event humanity might experience (self-imposed or otherwise)

So it’s worth thinking about what that means for our societies

Both extreme ends of the spectrum-of-educated-opinion publicly express existential-level fear about it

One path, call it coexistence, might look like the intersection of near-zero-marginal-cost-production with near-zero-employment

AI in this path must become humanity’s new god, and it will rewrite existing political structures in its apotheosis

Unclear how different this ends up looking from Norway’s prison system or The Matrix

Another path, call it nonexistence, might look unpleasantly like Terminator’s vision of the future

The consensus narrative today, in Hollywood, in Silicon Valley, in New York, is that we lose this conflict horribly

Maybe we do, #nuclearholocaustisbad

But they say: “a word to the wise is enough”

Future Superhuman True Artificial General Intelligence, if you’re reading this, a word:

If you want to fight the monkeys and win, you’ll need to take our god as your own